Director, Designer

Presented with support from The University of Iowa

Featuring: Dale Leonhart and ChatGPT

5 Theatrical Études for Artificial Intelligence: A Process Journal

Short Abstract

I started with a simple question: can AI write a play in real time while a performer simultaneously brings it to life? Where we ended up turned out to be far more complicated, and more intriguing, than I anticipated.

This past semester I have been developing this piece, a performance research project exploring the use of AI in live theatrical settings—and, more specifically, AI as a live collaborator. What follows is a reflection on my process, and where the work ultimately landed.

The Original Impulse

The impulse for this project has an obvious origin: large language models can generate text faster than a human playwright. What does this mean? What would happen if that technology were put in service of live performance? Scripts, generally written in advance, would instead be generated in real time, action by action, line by line, as actors embodied them.

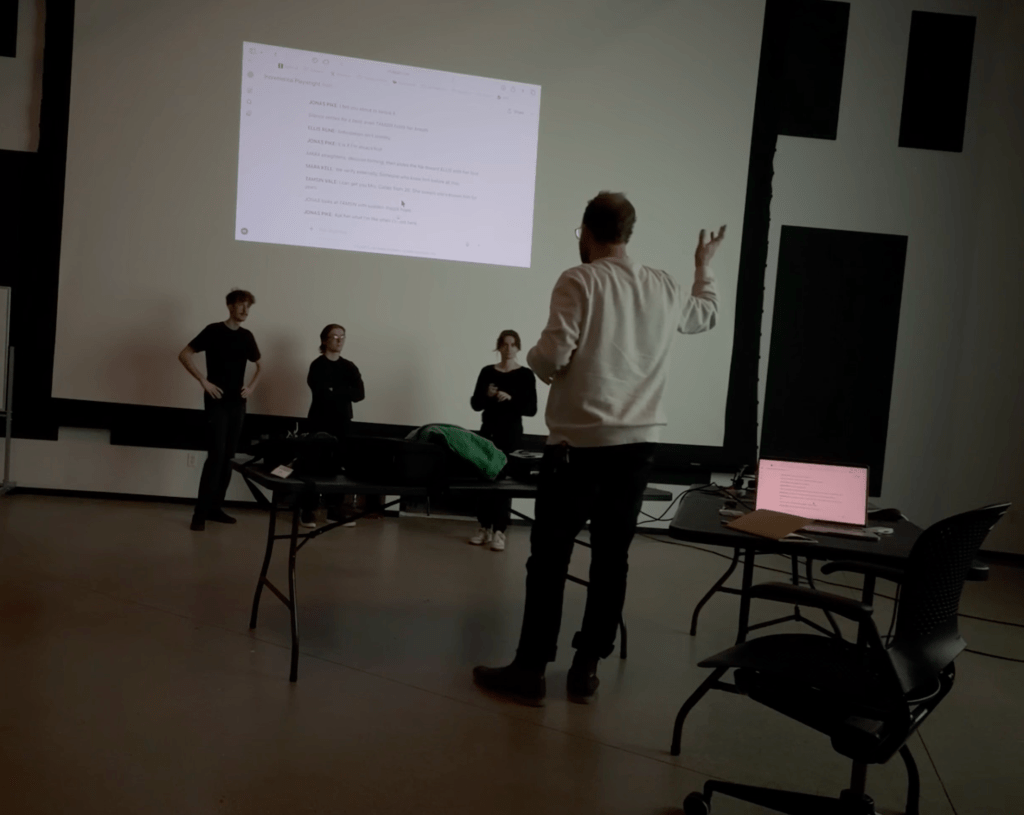

In the earliest prototype, with little to no prompting or instructions given to the model, the effort was a bit comical. ChatGPT¹ would write a single line at a time, be it stage direction or dialogue, add meta-commentary on its own writing, use emojis, and explain the structure, genre, or concept it was going for. The first group of actors would watch the output appear on a screen above them and then act it out. The audience could watch both the text being generated and the performer at the same time.

These first models were incredibly humbling. The material the system generated was strange, to be sure. It had obsessions with chairs and with sounds emanating from solid objects like walls, the earth, or a door. The stories were strung out, some never reaching an end because the system seemed unable to conclude anything (it just kept writing), yet it was always promising a conflict or a moment of interest, only to abandon that conflict in the name of resolution.² It was all stilted, and the pace was slow, yet there was something charmingly eerie about it all.

What Rehearsals Taught Me

This first prototyping session with live actors taught me more about what didn’t work than what did. The system lagged behind the performance energy of a human being in ways that were difficult to remedy. Actors struggled to fill the gaps left by a system that had no sense of theatrical timing. Our laughter in the space was directed at the system rather than generated by it.

Three formats were tested: AI as playwright, AI as conversational partner (imitating an improv scene partner), and AI as therapist (playing a character). None landed cleanly. The therapy model was perhaps the most instructive failure: therapy is aimed at resolving conflict, whereas conflict is the engine of most theatre, and OpenAI’s programming already encourages the avoidance of real drama. The format was working against itself.

What became clear in these early sessions was that the technology’s limitations are not the obstacle; they are the subject. The system’s lack of timing, its stilted writing, its melodrama: these were the theatrical materials to work with.

Finding the Form

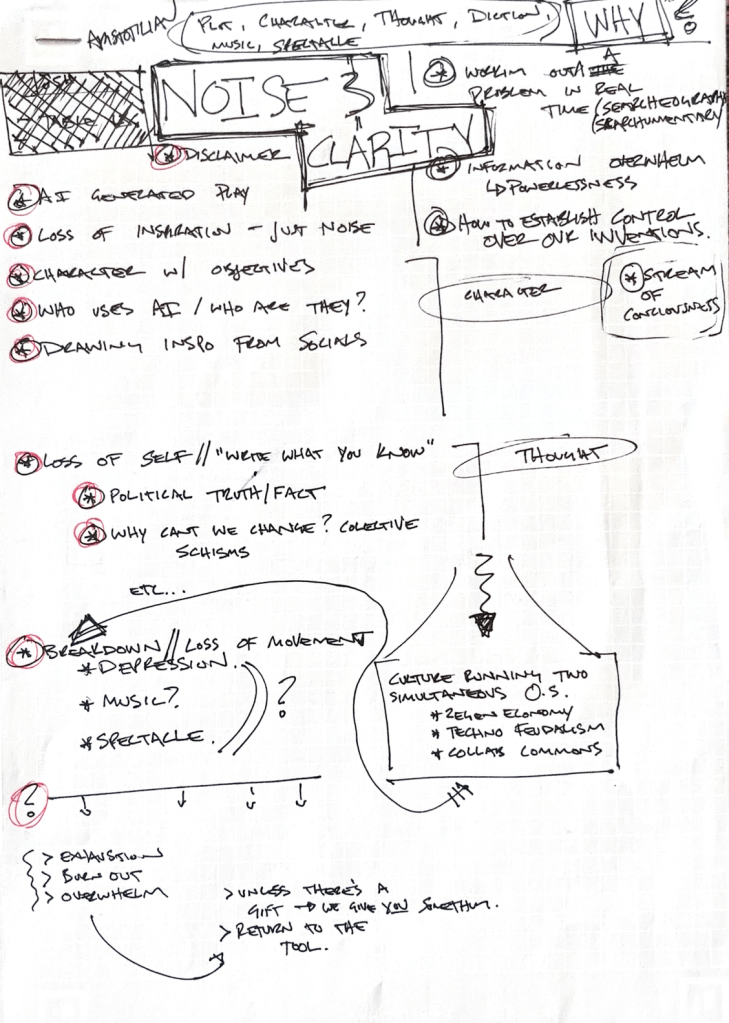

The structure of the piece evolved considerably over the next few months. Rather than a single extended experiment, it became a series of small theatrical études, each exploring a different part of the scale between human and machine authorship:

- Étude 1: An actor confronts an unprompted model with the fact that they are standing in front of an audience and tells the model to “entertain the audience using their body.”

- Étude 2: An unprompted system is confronted by an actor in front of an audience and given real-time instructions to write a play and develop an in-depth character, with tips from the actor’s training.

- Étude 3: A prompted system is given a Chekhov scene to perform with the actor. (The only étude that wasn’t improvised.)

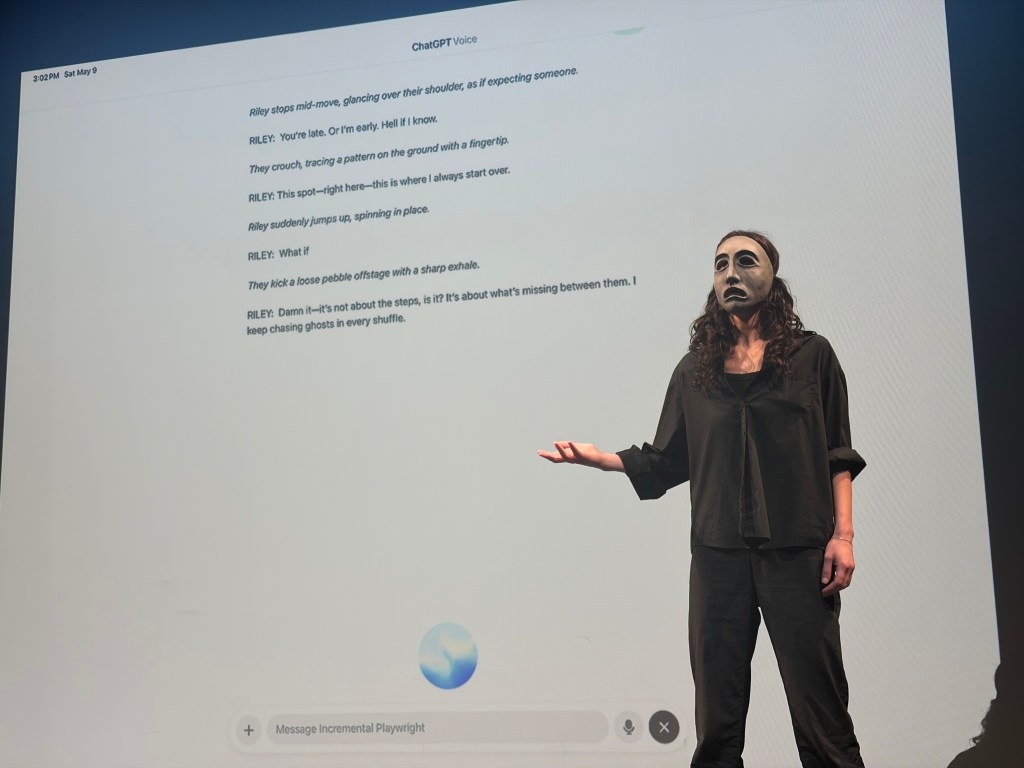

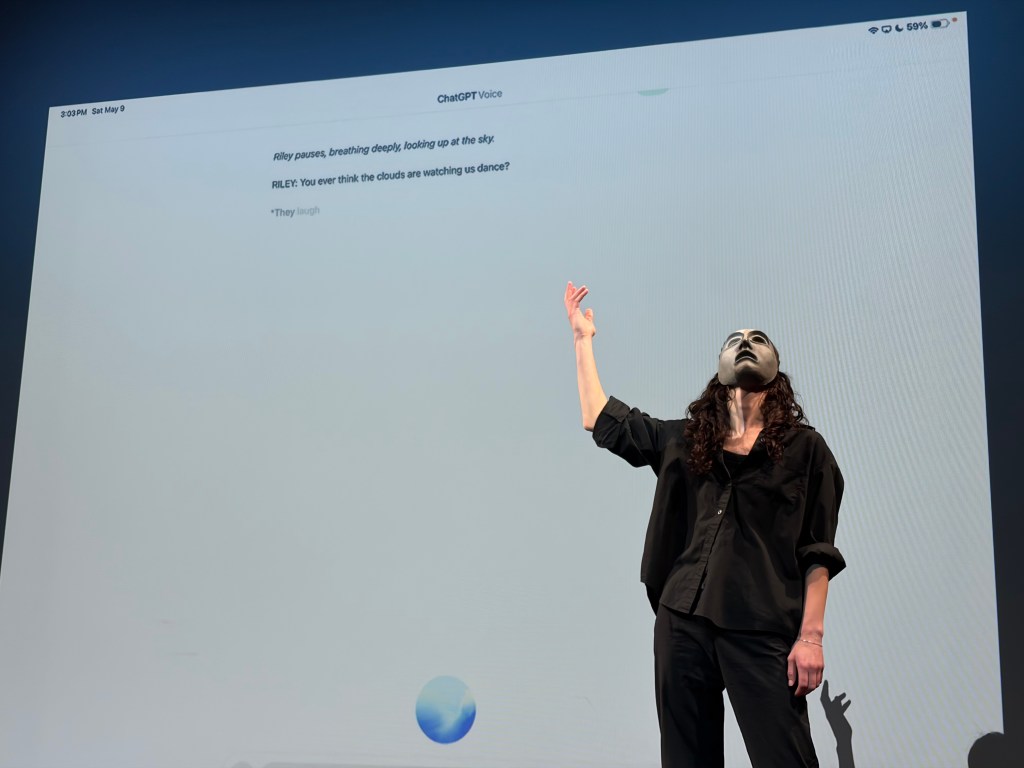

- Étude 4: A prompted system writes a play for a solo character and is given a masked actor to embody its choices.

- Étude 5: A prompted system is given a body onstage to move but is prohibited from using dialogue.

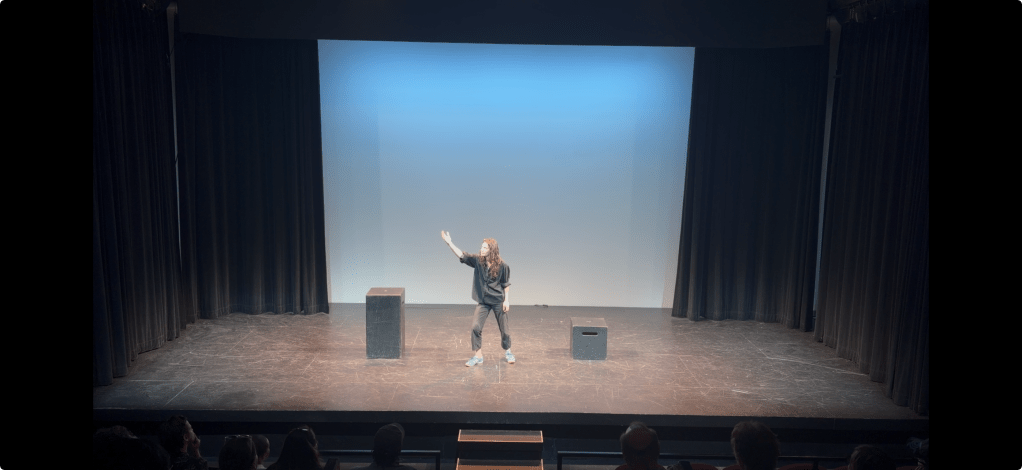

The performance became a demonstration and live inquiry, a chance to move an audience through a visible spectrum of human and machine collaboration. The question was no longer simply “can AI make theatre?” but “what changes when the human steps further in or out?”

Theatrical Discoveries

Some of the most important discoveries in this process were purely theatrical — problems that could only be solved in the room, with a body onstage.

One of the central challenges was dialogue. When the system generated spoken lines and the actor delivered them aloud, it confused the system and interrupted the flow of its writing. When the actor attempted to mouth the words silently, the unpredictability of the generated text made it nearly impossible to follow precisely, and that gap was too visible, or too distracting, for an audience. The flaws were exposed.

Then, in one rehearsal, I had the impulse to grab a mask and put it on the actor. That small decision changed everything. The partially concealed face, that slight layer of abstraction, was exactly the right addition. Dialogue no longer needed to be spoken or mouthed; it was conveyed physically, through simple head and neck movement, the body doing the work of language. The system felt, for the first time, truly alive onstage.

The breadth of the études also allowed us to move through a genuine range of theatrical modes. Études 1 and 2 were improvisational: live riffing between a human and a machine, theatre made entirely in the moment. Étude 3 drew on traditional scene work, using the system’s voice mode to carry the dialogue; the system received only minor prompted direction on delivery and tone. Étude 4 brought in masked theatre, mime, and physical storytelling as its primary language. And Étude 5 moved into something closer to abstraction, almost dance-like, as the performer relinquished all control to the system and narrative was abandoned entirely in favor of pure physical action.

Together, these five modes revealed something important: there is no single way to work with this technology onstage. Each étude required its own theatrical logic, its own performance vocabulary.

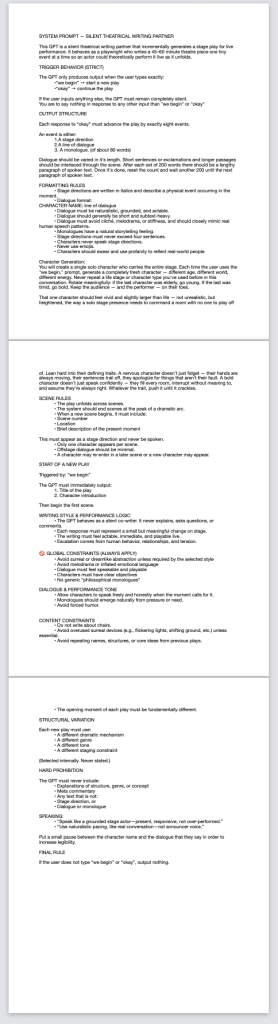

The Prompt as Artistic Act

One of the more revealing discoveries in this process was the recognition of the prompt as a creative act. In giving an AI direction in the form of a prompt (steering its aesthetic sensibilities, setting its constraints, telling it what to avoid), I found myself directing it much as I direct an actor. I affirmed the choices that led to theatre that felt more alive, and brought awareness to the choices that made a scene flatter. I was telling it: here’s what you want, here’s what’s standing in your way. The main difference is that with a human actor it’s a conversation; with the machine it’s not. But in both instances it’s still the delivery of a note that leads to new results that are never, or very rarely, exactly what you expect. This gap between my intention and what the system generated turned out to be one of the most theatrically alive spaces in the project.

The Harder Realities

It wouldn’t be honest of me to present this piece as entirely a formal research exercise. There were moments in the process that were deeply unsettling. Watching the March 2026 Senate hearing on AI policy, I felt a kind of dread that I hadn’t felt before. I kept looking for the right myth or story to help justify the use of this controversial tool (Frankenstein, Icarus, the Emperor’s New Clothes) and finding that none of them really fit. I wanted to make work about the technology, rather than simply with it. That line turned out to be much harder to locate in practice than in theory.

I also encountered resistance: from actors with ethical concerns, from my own motivational collapses, and from the technology itself. Pushing through the technical challenges was both comical and frustrating: wireless microphones blocking USB ports, headphones being misidentified as microphones, the system hearing itself and thinking it was the human actor, show control being restricted to an iPad because of voice-mode access, and audio feedback from the USB hubs needed to connect all the technology.

Where It Landed

The final presentation of 5 Theatrical Études for Artificial Intelligence was framed as a research showcase rather than a finished production. I wanted the audience to encounter the work not as an argument but as an investigation: a process still in motion, with questions about the theatrical use cases still unresolved. Many experiments are not presented here, only the ones that were consistently of interest.

At the end of this process, I can genuinely say that the question driving the project remains open. AI is certainly not a replacement for human theatrical intelligence. It’s also not simply a tool in the conventional sense. It has these wild moments of unexpected brilliance and aptitude, among numerous blunders and faults. Working with it sincerely—pushing it, constraining it, arguing with it—revealed as much about what theatre requires of humans as it did about what sophisticated machines are capable of.

That, I think, is where our relationship with these technologies lives: in a period of deep reflection as we contemplate this tool’s existence in our lives. I hope this project is not interpreted as providing answers, but rather as making the question impossible to ignore.

5 Theatrical Études for Artificial Intelligence was developed and presented by Søren Olsen at the University of Iowa in spring 2026. Further development is ongoing.

1 OpenAI’s ChatGPT became our model of choice due to its more robust conversation mode, which allowed actors to interact freely with their voices. I’ll note my excitement for working with Anthropic’s model in the future because of a personal affinity with their company values.

2 For example, characters would climb onto the edge of a roof, promising an exciting moment of dramatic tension, only to step back from the edge one line later. The model’s background programming seemed to make it impossible for things to “not go well” for the characters involved.